Lens was envisioned as an intelligent system for aggregating live social feeds about brands and identifying the mood of each message. Moods are classified into five categories: joy, sadness, fear, anger, and disgust. Each message is represented as a single particle in a constellation shaped like the brand's logo, so a vast space can hold thousands of feeds for the user to explore.

The team consisted of three people, and I was primarily responsible for delivering the prototype. To find the ideal form of interaction, I experimented with Leap Motion as a controller and explored visual designs with the art director. We eventually landed on a large, immersive touch-screen experience with 3D graphics and navigation capabilities.

To let the machine gauge the general attitude of the social feeds, we used Crimson Hexagon, a social media analytics tool aimed at brands and agencies.

To keep the prototype affordable and achievable, I designed a gesture-controlled application for the 12.9-inch iPad Pro. It illustrated the main user flow and presented high-fidelity visual elements and transitions.

I leveraged the mobile GPU to render 2,000 moving particles at 60 FPS with custom GLSL shaders. Optimization was tricky because of how some shader attributes behave. For example, once particle positions are manipulated in a vertex shader, they can no longer be passed back to JavaScript. So I carefully planned how the rendering logic was divided between the shaders and JavaScript, exposing the particles' key attributes to JavaScript to keep the moving particles flexible to work with.
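One way to keep particle attributes readable from JavaScript is to store them in typed arrays on the CPU and treat the shader as a pure drawing step. The following is a minimal sketch of that idea, not the project's actual code: the names `createParticles`, `stepParticles`, and `uploadPositions` are hypothetical, and the WebGL context and buffer are assumed to be created elsewhere.

```javascript
// Hypothetical sketch: JavaScript owns the particle state, the shader only draws it.
function createParticles(count) {
  return {
    count,
    positions: new Float32Array(count * 3),  // x, y, z per particle
    velocities: new Float32Array(count * 3), // vx, vy, vz per particle
  };
}

// Advance every particle on the CPU so positions stay readable from JavaScript.
function stepParticles(particles, dt) {
  const { positions, velocities } = particles;
  for (let i = 0; i < positions.length; i++) {
    positions[i] += velocities[i] * dt;
  }
}

// Copy the current positions into a WebGL buffer once per frame.
// `gl` and `buffer` are assumed to be a WebGL context and a buffer made by the caller.
function uploadPositions(gl, buffer, particles) {
  gl.bindBuffer(gl.ARRAY_BUFFER, buffer);
  gl.bufferData(gl.ARRAY_BUFFER, particles.positions, gl.DYNAMIC_DRAW);
}
```

The trade-off in this sketch is one buffer upload per frame in exchange for positions that any JavaScript code (hit testing, physics, transitions) can read at any time.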

There is also some interesting physics applied to the particles when you swipe through them. In a nutshell, these are attracting and repelling motions inspired by Chrome Experiment #500. For those effects, I used the same physics library, Traer.js, a JavaScript port of the original Processing library with very intuitive APIs. One noticeable difference from Google Creative Lab’s work is that Lens particles are presented in 3D, which adds realism while they are in motion.
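The attract-and-repel behavior can be illustrated in plain JavaScript. This sketch deliberately does not use the Traer.js API. It assumes an inverse-square force toward (or away from) a point such as the touch position, and the function names and parameters (`applyAttraction`, `integrate`, `strength`, `minDistance`, `drag`) are hypothetical.

```javascript
// Hypothetical sketch of an attraction/repulsion force in 3D (not the Traer.js API).
// strength > 0 pulls the particle toward the target, strength < 0 pushes it away.
function applyAttraction(p, target, strength, minDistance, dt) {
  const dx = target.x - p.x;
  const dy = target.y - p.y;
  const dz = target.z - p.z;
  const distance = Math.hypot(dx, dy, dz);
  if (distance === 0) return; // no direction to push along

  // Clamp the distance so the force does not blow up at close range.
  const clamped = Math.max(distance, minDistance);
  const force = strength / (clamped * clamped);

  // Accelerate along the unit vector pointing at the target.
  p.vx += (dx / distance) * force * dt;
  p.vy += (dy / distance) * force * dt;
  p.vz += (dz / distance) * force * dt;
}

// Damp the velocity, then move the particle.
function integrate(p, drag, dt) {
  p.vx *= 1 - drag;
  p.vy *= 1 - drag;
  p.vz *= 1 - drag;
  p.x += p.vx * dt;
  p.y += p.vy * dt;
  p.z += p.vz * dt;
}
```

In a swipe handler, the same function could be called for every particle with a negative `strength` near the finger to scatter particles, and a positive `strength` toward each particle's home position to pull the logo shape back together.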

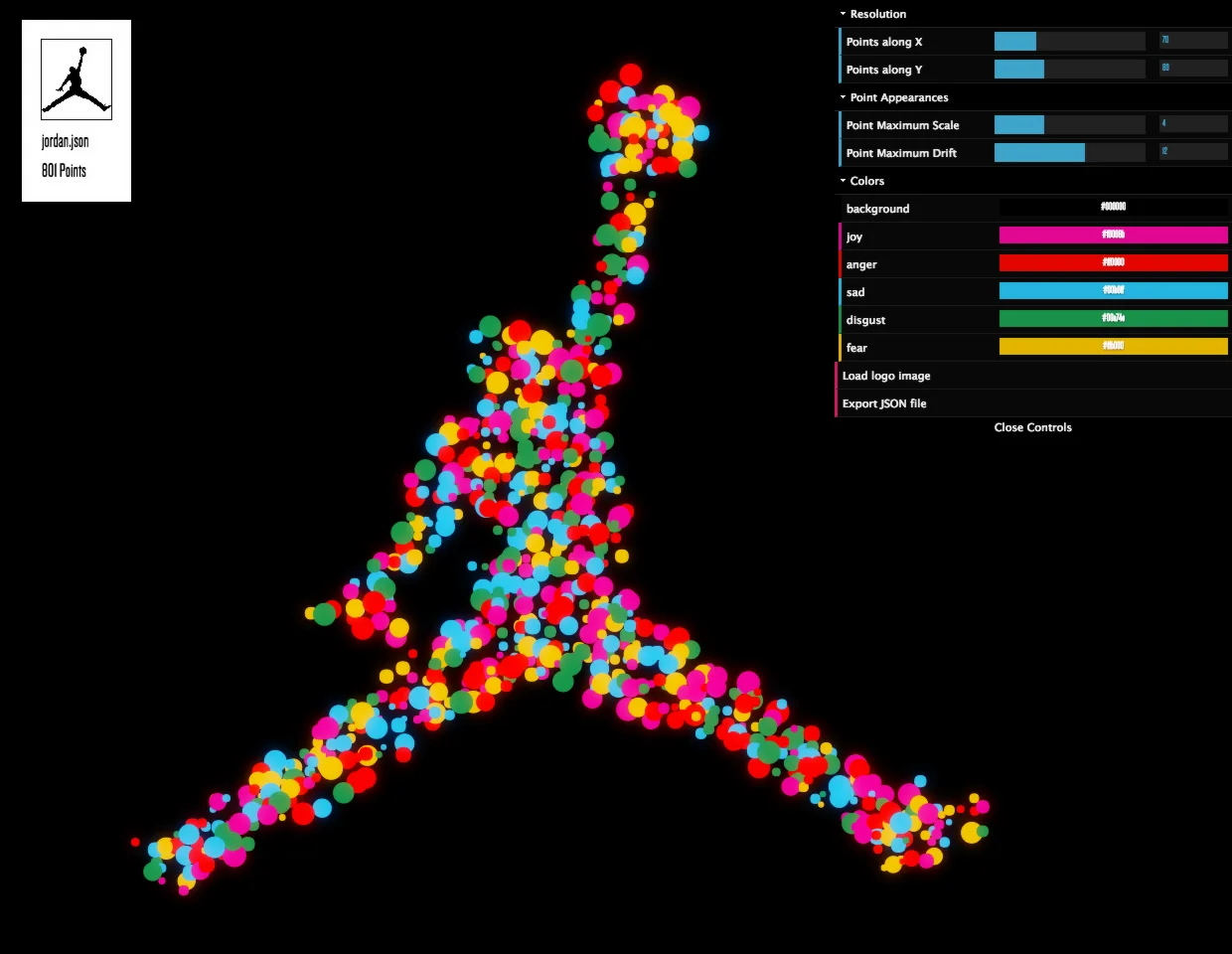

I advocate for opening technology up to creatives. I see it as an opportunity to stimulate creative input and set up team goals. The image below shows a tool that’s capable of generating JSON files for the brand shapes from transparent images. Originally, these somewhat mysterious JSON files were only meant to record the results as I moved on to a different logo design. However, they were soon used as interchangeable design documents across teams, as I had instructed in a group meeting.
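The core of such a tool can be sketched as a function that scans an image's alpha channel and records where the logo is opaque. This is an illustrative sketch under assumptions, not the actual tool: the function name `shapeFromAlpha`, the `step` and `alphaThreshold` parameters, and the output schema (`width`, `height`, `points`) are all hypothetical. The input is assumed to be RGBA pixel data laid out like the `data` array of a canvas `ImageData` object.

```javascript
// Hypothetical sketch: turn the opaque pixels of a transparent image into a JSON shape.
// `rgba` holds 4 bytes per pixel (R, G, B, A), row by row, like ImageData.data.
function shapeFromAlpha(rgba, width, height, step = 1, alphaThreshold = 128) {
  const points = [];
  for (let y = 0; y < height; y += step) {
    for (let x = 0; x < width; x += step) {
      const alpha = rgba[(y * width + x) * 4 + 3];
      if (alpha >= alphaThreshold) {
        points.push([x, y]); // this pixel belongs to the logo shape
      }
    }
  }
  // Assumed schema: image size plus the list of sampled pixel coordinates.
  return JSON.stringify({ width, height, points });
}
```

A larger `step` samples fewer pixels, which is one way to keep the number of points close to the particle budget of the scene.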